Local LLM on Industrial Raspberry Pi

One of the defining trends of 2023 was large language models (LLMs) such as ChatGPT. Beyond the chat UI itself, many products and services now use the ChatGPT API to add new functionality to IT systems. In OT systems such as factory automation, however, integrating the ChatGPT API into existing systems is difficult. Typical OT systems run on closed networks without an Internet connection.

A closed network is very secure, but it means LLMs have to be used in a different way. Recently, various companies and organizations have released their own LLMs, including models that have learned domain-specific knowledge. By running these LLMs locally, we can apply them in the OT field.

In this tutorial, I used TinyLlama, a lightweight 1.1-billion-parameter LLM released by the Singapore University of Technology and Design. As the edge device, I used the reTerminal DM, an industrial Raspberry Pi with a touch display. Using TinyLlama and the reTerminal DM, I show how to implement a chat UI that novice factory engineers can use to query OT domain knowledge.

Updating Raspberry Pi OS in reTerminal DM

My reTerminal DM already had Raspberry Pi OS installed in its eMMC storage. As a first step, I updated it to the latest release (Raspberry Pi OS (64-bit) with Desktop, 2023-12-05) by following this official document:

https://wiki.seeedstudio.com/reterminal-dm-flash-OS/

Next, open the terminal application from the Raspberry Pi OS menu. To download the TinyLlama model from Hugging Face, run the following wget command in your home directory, “/home/pi”.

```shell
wget https://huggingface.co/TheBloke/TinyLlama-1.1B-Chat-v1.0-GGUF/resolve/main/tinyllama-1.1b-chat-v1.0.Q4_K_M.gguf
```

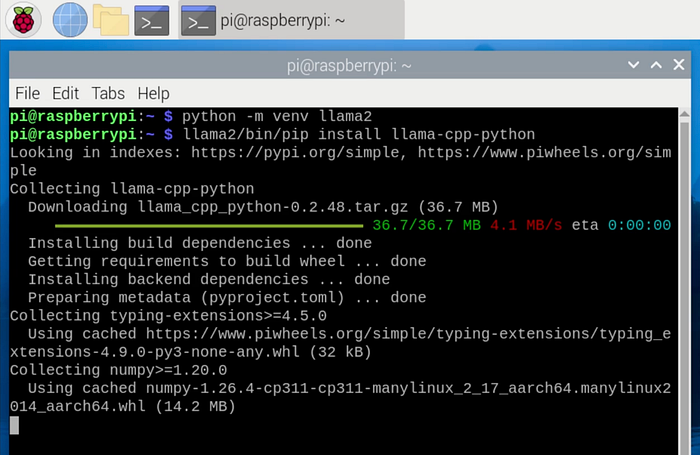
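A wrong URL can save an HTML page instead of the model, for example one that uses `/blob/` instead of `/resolve/`. That mistake only shows up later, when the model fails to load. As an optional sanity check, here is a minimal sketch that reads only the first 8 bytes of the file. It assumes the GGUF layout: a 4-byte `GGUF` magic followed by the format version as a little-endian uint32. The helper names `gguf_version` and `check_model_file` are hypothetical.

```python
import sys
from pathlib import Path
from typing import Optional

GGUF_MAGIC = b"GGUF"  # the 4 bytes every GGUF file starts with


def gguf_version(header: bytes) -> Optional[int]:
    """Return the GGUF version from a file's first 8 bytes, or None if not GGUF."""
    if len(header) < 8 or header[:4] != GGUF_MAGIC:
        return None
    # The magic is followed by the format version as a little-endian uint32.
    return int.from_bytes(header[4:8], "little")


def check_model_file(path: str) -> Optional[int]:
    """Read only the header, so a large model file is checked instantly."""
    with Path(path).open("rb") as f:
        return gguf_version(f.read(8))


if __name__ == "__main__":
    model = sys.argv[1] if len(sys.argv) > 1 else "tinyllama-1.1b-chat-v1.0.Q4_K_M.gguf"
    if not Path(model).is_file():
        print(f"{model}: file not found")
    else:
        version = check_model_file(model)
        if version is None:
            print(f"{model}: not a GGUF file (possibly an HTML page)")
        else:
            print(f"{model}: GGUF version {version}")
```

Run it from the home directory after the download finishes. It prints the detected version, or a hint that the file is not a GGUF model.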

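Raspberry Pi OS comes with Python, so the python and pip commands are available by default. However, the Bookworm-based release refuses system-wide `pip install` (PEP 668). Instead, create a virtual environment named `llama2` and install the `llama-cpp-python` module into it. The Python program needs this module to call the model.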

```shell
python -m venv llama2
llama2/bin/pip install llama-cpp-python
```
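You can confirm that the module landed in the virtual environment rather than in the system Python. A small standard-library check like the following can be run with `llama2/bin/python check_env.py`; the file name `check_env.py` and the function `environment_report` are hypothetical. It relies on two facts: inside a venv, `sys.prefix` differs from `sys.base_prefix`, and `importlib.util.find_spec` returns `None` for a module that cannot be imported.

```python
import importlib.util
import sys


def environment_report(module_name: str = "llama_cpp") -> dict:
    """Report whether we run inside a venv and whether a module is importable."""
    return {
        # In a venv, sys.prefix points at the venv while sys.base_prefix
        # still points at the system Python installation.
        "in_venv": sys.prefix != sys.base_prefix,
        "python": sys.executable,
        # find_spec returns None when the top-level module cannot be found.
        "module_found": importlib.util.find_spec(module_name) is not None,
    }


if __name__ == "__main__":
    for key, value in environment_report().items():
        print(f"{key}: {value}")
```

If `in_venv` or `module_found` is `False`, the script was probably started with the system `python` instead of `llama2/bin/python`.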

Then, in a text editor such as `vi`, create a file `llama2.py` that contains the following Python code.

import sys

from llama_cpp import Llama

llm = Llama(model_path="tinyllama-1.1b-chat-v1.0.Q4_K_M.gguf", verbose=False)

output = llm("<user>\n" + sys.argv[1] + "\n<assistant>\n", max_tokens=40)

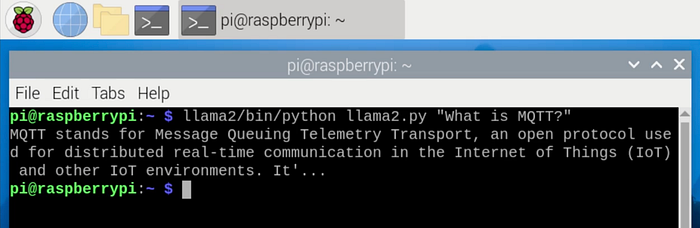

print(output['choices'][0]["text"] + "...")This Python code loads the TinyLlama model using the `llama_cpp` module and generates the response text from the prompt received via the command argument. To limit the maximum length of the response text to 40 characters, the code specifies the `max_tokens=40` in the llm() function. To check the behavior of the Python program, run the following command.

```shell
llama2/bin/python llama2.py "What is MQTT?"
```

The answer text will be displayed in the terminal.
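The prompt in `llama2.py` wraps the question in plain `<user>` and `<assistant>` markers. The model accepts these, but they are not the format TinyLlama-1.1B-Chat-v1.0 was fine-tuned on. As far as I know, its model card documents a Zephyr-style template with `<|user|>` and `<|assistant|>` markers and `</s>` as the end-of-turn token. The sketch below builds that format. Verify the exact markers against the model card before relying on it. `build_prompt` is a hypothetical helper.

```python
from typing import Optional

# Assumption: "</s>" is the end-of-sequence token that closes each turn.
EOS = "</s>"


def build_prompt(question: str, system: Optional[str] = None) -> str:
    """Wrap a single question in a Zephyr-style chat template."""
    parts = []
    if system:
        parts.append(f"<|system|>\n{system}{EOS}\n")
    parts.append(f"<|user|>\n{question}{EOS}\n")
    parts.append("<|assistant|>\n")  # the model continues generating from here
    return "".join(parts)
```

In `llama2.py`, the call would then become `llm(build_prompt(sys.argv[1]), max_tokens=40)`. Recent versions of `llama-cpp-python` also offer `Llama.create_chat_completion`, which formats a list of chat messages for you. Which template it applies depends on the library version and its `chat_format` argument.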

Creating a Chat UI for the reTerminal DM Screen

Because command-line operations are not user-friendly, I will create the chat UI using Node-RED. To install Node-RED, run the following install script in the terminal.

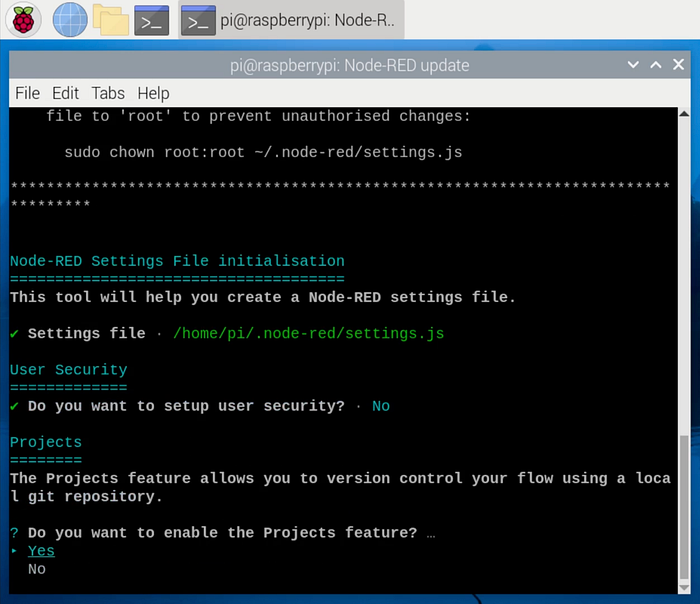

```shell
bash <(curl -sL https://raw.githubusercontent.com/node-red/linux-installers/master/deb/update-nodejs-and-nodered)
```

When the configuration wizard asks “Do you want to enable the Project feature?”, select “Yes”. This lets you clone the Node-RED program (flow) from a GitHub repository later.

After applying the automatic startup with the following command, restart Raspberry Pi.

sudo systemctl enable nodered.service

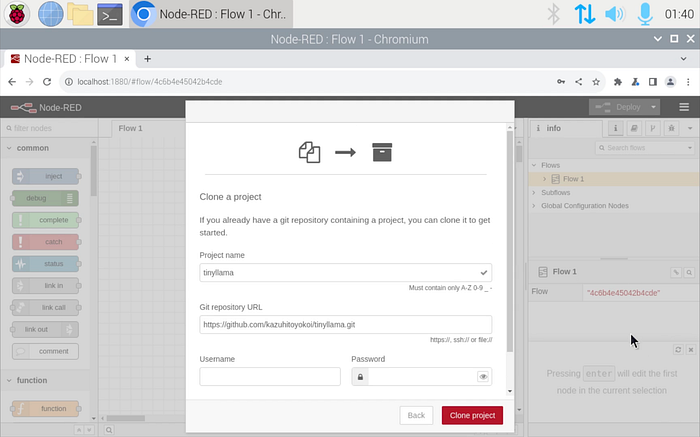

sudo rebootOnce the Raspberry Pi desktop has reappeared, open the Node-RED flow editor, http://localhost:1880 in the Chromium browser. In the first step of the wizard, click the “Clone Repository” button to download the Node-RED flow from the GitHub repository.

After completing the user settings, paste `https://github.com/kazuhitoyokoi/tinyllama.git` into the Git repository URL field.

The imported flow contains some unknown nodes because a required module is not installed yet.

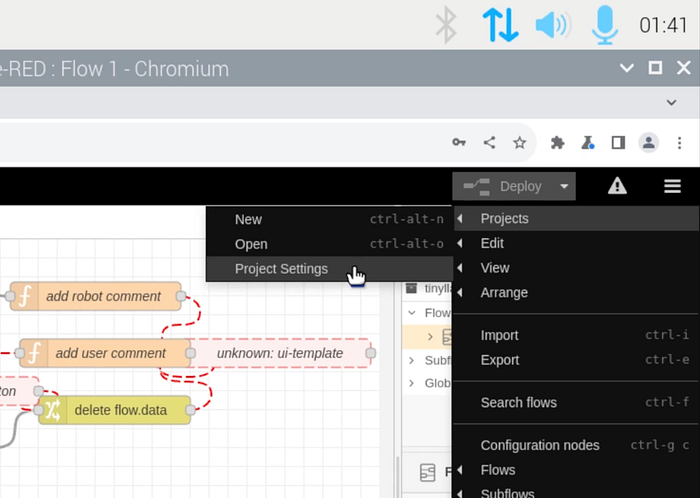

To fix this, select Menu -> Project -> Project Settings, open the Dependencies tab, and click the install button for `@flowfuse/node-red-dashboard`.

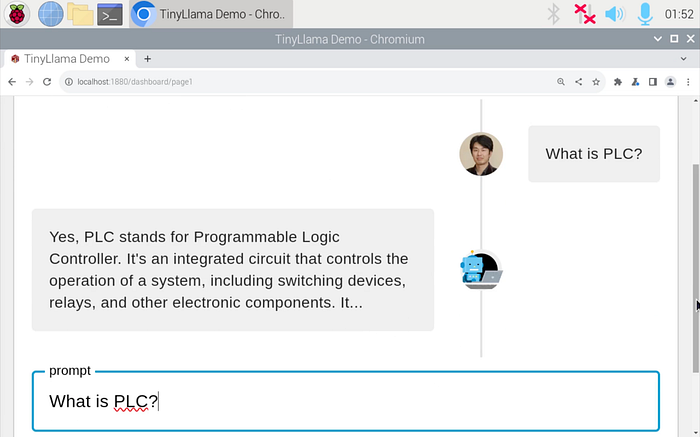
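You may prefer to check from the terminal which of the project's declared modules are still missing. Below is a minimal sketch that compares the `dependencies` in the project's `package.json` with the packages in `node_modules`. All three paths are assumptions about a default setup: Node-RED's user directory at `~/.node-red`, the cloned project stored as `~/.node-red/projects/tinyllama`, and modules installed into `~/.node-red/node_modules`. The helper names are hypothetical.

```python
import json
from pathlib import Path
from typing import Dict, List, Set

# Assumed locations for a default Node-RED install; adjust to your setup.
USER_DIR = Path.home() / ".node-red"
PACKAGE_JSON = USER_DIR / "projects" / "tinyllama" / "package.json"


def declared_modules(package: Dict) -> List[str]:
    """Module names listed under "dependencies" in a parsed package.json."""
    return sorted(package.get("dependencies", {}))


def installed_modules(node_modules: Path) -> Set[str]:
    """Names of the packages found in a node_modules directory."""
    names: Set[str] = set()
    if not node_modules.is_dir():
        return names
    for entry in node_modules.iterdir():
        if entry.name.startswith(".") or not entry.is_dir():
            continue  # skip .bin, .package-lock.json, and stray files
        if entry.name.startswith("@"):
            # Scoped packages are nested one level deeper: @scope/name
            names.update(f"{entry.name}/{sub.name}" for sub in entry.iterdir())
        else:
            names.add(entry.name)
    return names


def missing_modules(package: Dict, installed: Set[str]) -> List[str]:
    """Declared dependencies that are not present in the installed set."""
    return [name for name in declared_modules(package) if name not in installed]


if __name__ == "__main__":
    if PACKAGE_JSON.exists():
        package = json.loads(PACKAGE_JSON.read_text())
        missing = missing_modules(package, installed_modules(USER_DIR / "node_modules"))
        print("Missing:", ", ".join(missing) if missing else "none")
    else:
        print(f"{PACKAGE_JSON} not found")
```

An empty "Missing" list suggests the unknown nodes should disappear once the flow is redeployed.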

Now the flow can run. To open the chat UI, select the “Dashboard 2.0” tab in the right sidebar and click the “Open Dashboard” button.

Type a question into the chat UI, and the LLM will answer it.

Because the model runs entirely on the reTerminal DM, the chat UI works without an Internet connection.
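The `llama2.py` script can also be reused from another program, for example a different front end. Below is a minimal sketch using Python's `subprocess` module. The helpers `build_command` and `ask_llm` are hypothetical, and the paths assume the `llama2` venv and `llama2.py` live in the home directory as set up earlier. Passing an argument list without a shell keeps the whole question as one argument, so spaces and quotes need no escaping.

```python
import os
import subprocess
from typing import List

# Hypothetical defaults matching the setup above (both relative to /home/pi).
PYTHON_EXE = "llama2/bin/python"
SCRIPT = "llama2.py"


def build_command(question: str, python_exe: str = PYTHON_EXE,
                  script: str = SCRIPT) -> List[str]:
    """Argument list equivalent to: llama2/bin/python llama2.py "<question>"."""
    # No shell is involved, so the question stays a single argument.
    return [python_exe, script, question]


def ask_llm(question: str, home: str = os.path.expanduser("~"),
            timeout: float = 300.0) -> str:
    """Run llama2.py from the home directory and return the printed answer."""
    result = subprocess.run(
        build_command(question),
        cwd=home,            # relative paths above are resolved from here
        capture_output=True,
        text=True,
        timeout=timeout,     # arbitrary upper bound; loading the model takes time
        check=True,          # raise CalledProcessError if the script fails
    )
    return result.stdout.strip()
```

For example, `print(ask_llm("What is MQTT?"))` prints the same answer as the terminal command, including the trailing "...".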

Summary

In this tutorial, I showed how to create a chat UI for querying OT domain knowledge using TinyLlama and the reTerminal DM. This chat UI is one example of how factory engineers can use LLMs released by leading companies and organizations. As more versatile LLMs are released, they are likely to help realize IT/OT convergence in the near future, and we should use these technologies to modernize factories.